Google catches first zero-day exploit built with AI assistance

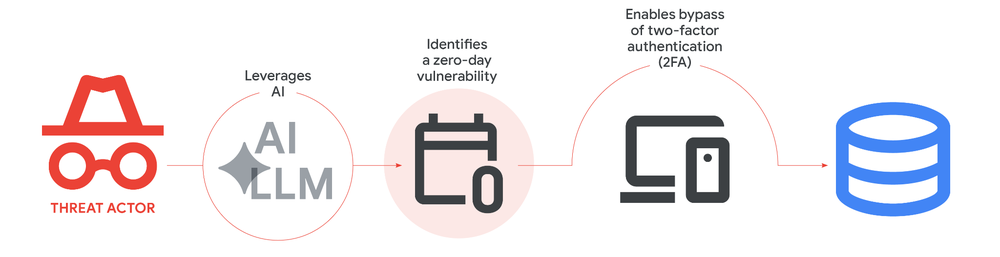

- Google found the first known zero-day exploit it believes was built using AI.

- The exploit targets two-factor authentication (2FA) on an open-source admin tool.

- State-sponsored hackers from China and North Korea are actively using AI models for vulnerability research and exploit development.

Google’s Threat Intelligence Group said Sunday it caught what it believes is the first zero-day exploit built with help from an AI model.

A criminal hacking group wrote it as a Python script to bypass two-factor authentication (2FA) in an open-source web admin tool, according to a report Google published on its Cloud Blog. The company worked with the vendor to stop mass exploitation before it started.

Google linked the exploit to AI through code patterns

Google didn’t blame its own Gemini model. Analysts pointed to structural patterns in the code that strongly suggest AI involvement.

“Based on the structure and content of these exploits, we have high confidence that the actor likely leveraged an AI model to support the discovery and weaponization of this vulnerability,” Google wrote.

The Python script had unusually detailed educational docstrings, a hallucinated CVSS severity score, and formatting typical of large language model output.

That includes structured help menus and a clean color class written in textbook style.
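The indicators Google describes can be approximated with a few static checks. The sketch below is hypothetical and is not Google's detection method: the function name `llm_style_indicators`, the four-line docstring threshold, and the regular expression are all assumptions chosen for illustration. It can only flag that a script claims a CVSS score, not whether that score is hallucinated, and none of these signals proves AI authorship on its own.

```python
import ast
import re

# Assumed pattern: "CVSS" followed shortly by a score such as 9.8 or 10.0.
CVSS_CLAIM = re.compile(r"CVSS[^\n]{0,20}?\b(?:10\.0|\d\.\d)\b", re.IGNORECASE)


def _is_color_class(node: ast.ClassDef) -> bool:
    """True if every string constant assigned in the class is an ANSI escape code."""
    values = [
        stmt.value.value
        for stmt in node.body
        if isinstance(stmt, ast.Assign)
        and isinstance(stmt.value, ast.Constant)
        and isinstance(stmt.value.value, str)
    ]
    return bool(values) and all("\x1b[" in v for v in values)


def llm_style_indicators(source: str) -> dict:
    """Collect rough stylistic signals from Python source (illustrative heuristics only)."""
    tree = ast.parse(source)
    nodes = list(ast.walk(tree))
    documented = [
        n for n in nodes
        if isinstance(n, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef))
    ]
    return {
        # Docstrings of four or more lines (threshold is an assumption).
        "long_docstrings": sum(
            1 for n in documented
            if len((ast.get_docstring(n) or "").splitlines()) >= 4
        ),
        # Presence of a CVSS score claim anywhere in the text.
        "cvss_claim": bool(CVSS_CLAIM.search(source)),
        # Classes that only hold ANSI color codes.
        "color_classes": [
            n.name for n in nodes
            if isinstance(n, ast.ClassDef) and _is_color_class(n)
        ],
        # argparse is the usual source of structured help menus.
        "uses_argparse": any(
            (isinstance(n, ast.Import) and any(a.name == "argparse" for a in n.names))
            or (isinstance(n, ast.ImportFrom) and n.module == "argparse")
            for n in nodes
        ),
    }


# A harmless, made-up sample that shows the style in question.
SAMPLE = '''
import argparse

class Colors:
    RED = "\\033[91m"
    RESET = "\\033[0m"

def run(target):
    """
    Demonstrate the issue against a target.

    CVSS Score: 9.8 (Critical)
    Args:
        target: host to check
    """
    return target
'''

if __name__ == "__main__":
    print(llm_style_indicators(SAMPLE))
```

Plenty of human-written scripts would trip the same checks, so output like this is better treated as a triage hint for an analyst than as attribution.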

Google hasn’t named the hacking group or the specific tool that was targeted.

State-backed hackers use AI models for vulnerability research

Google’s report goes beyond the single zero-day case.

Hackers linked to China and North Korea have shown a strong interest in using AI to find and exploit software flaws, according to Google’s Threat Intelligence Group.

A Chinese threat group known as UNC2814 attacks telecom and government targets. The group used a technique Google calls persona-driven jailbreaking.

The group instructed an AI model to behave as a senior security auditor, then directed it to analyze embedded device firmware from TP-Link and Odette File Transfer Protocol implementations for remote code execution vulnerabilities.

A different group with ties to China used tools called Strix and Hexstrike to attack a Japanese tech firm and a major East Asian cybersecurity company.

North Korean group APT45 took a different approach. It sent thousands of repetitive prompts to recursively analyze known CVE entries and validate proof-of-concept exploits.

Google said this method produced “a more robust arsenal of exploit capabilities that would be impractical to manage without AI assistance.”

AI enables new forms of malware and evasion

The Google report covers other AI threats beyond vulnerability research.

Suspected Russian hackers have used AI to code and build polymorphic malware and obfuscation networks. The AI assistance speeds up their development cycles and helps the malware evade detection.

Google also warned about a type of malware it calls PROMPTSPY, which it described as a shift toward autonomous attack operations. The malware uses AI models to interpret system states and dynamically generate commands to manipulate victim environments, which lets attackers hand off operational decisions to the model itself.

Threat actors now obtain anonymized, premium-tier access to language models through specialized middleware and automated account registration systems. These services let hackers get around usage restrictions at scale, relying on trial accounts to fund their activity.

A group Google tracks as TeamPCP, also known as UNC6780, has begun targeting AI software dependencies as an entry point into broader networks. They use compromised AI tools as a foothold for ransomware deployment and extortion.

Google said it uses its own AI tools defensively. The company referenced Big Sleep, an AI agent that identifies software vulnerabilities, and CodeMender, which uses Gemini’s reasoning to automatically patch flaws.

Google also said it disables accounts caught misusing Gemini for malicious purposes.

FAQs

What zero-day exploit did Google detect?

Google detected a Python-based exploit designed to bypass 2FA on an open-source web admin tool. A criminal hacking group developed it for use in a mass exploitation campaign, and Google worked with the vendor to head that off before attacks began.

Which hacking groups are using AI for cyberattacks?

Google's report describes activity by Chinese groups such as UNC2814, the North Korean group APT45, suspected Russia-affiliated actors developing polymorphic malware, and a supply-chain-focused group called TeamPCP, also known as UNC6780.

How did Google determine the exploit was AI-generated?

Google analysts found structural indicators in the code, including detailed educational docstrings, a hallucinated CVSS score, and formatting patterns typical of LLM training data. The script also had textbook-style Python conventions and structured help menus, both typical of AI output.
