Rising Concerns Over AI Impact on Elections in 2024

- AI could affect the 2024 elections, but limitations include a short-lived impact and the need for skilled operators.

- Protect democracy by limiting AI personalization, verifying online identity, and holding deceptive politicians accountable.

- Natural safeguards and proactive measures can defend elections and democracy from AI disinformation.

The world is gearing up for a whirlwind of elections in various countries, with at least 65 scheduled in 54 nations. These elections are expected to involve over two billion voters.

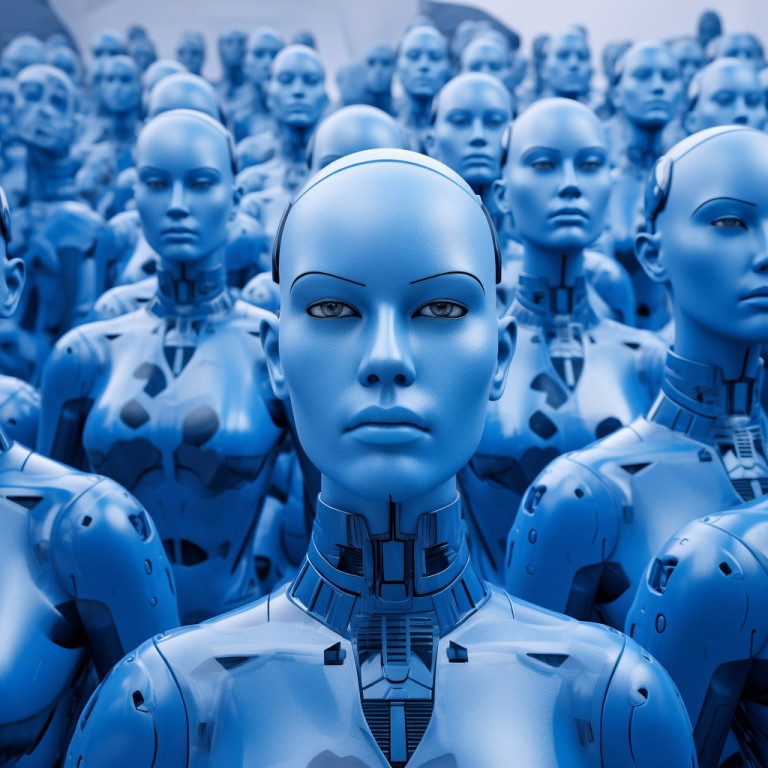

Amidst this democratic surge, concerns about the role of artificial intelligence (AI) in shaping electoral outcomes are mounting. While the potential impact of AI-generated disinformation is a cause for concern, several factors currently mitigate its influence.

AI-generated disinformation: A growing concern

In recent years, incidents have highlighted the potential risks of AI-generated disinformation. For instance, a deepfake video of Ukrainian President Volodymyr Zelensky allegedly declaring his country’s surrender, an AI-generated photo of an explosion at the Pentagon, and fake audio clips purporting to demonstrate election rigging have all circulated online.

These instances underscore the concern that AI could be harnessed to manipulate public perception during election cycles.

One reassuring aspect is the short-lived impact of most AI-generated disinformation. In the cases mentioned, the fakes were quickly debunked and did not gain traction in authoritative news sources. To be truly dangerous, such disinformation must integrate itself into broader, more convincing narratives.

The need for skilled human operators

While AI can generate convincing content, it lacks the autonomy to create, spread, or sustain stories without skilled human operators. These individuals possess knowledge across politics, news, and distribution; their expertise is essential for crafting and disseminating persuasive narratives.

Just as many human-run political campaigns fail, AI-enabled ones are likely to fall short without the guidance of skilled individuals. Nevertheless, we should remain vigilant as AI’s capabilities continue to evolve.

The contemporary media landscape is fragmented, with users dispersed across various social networks and platforms. The rise of niche communities on platforms like Threads, BlueSky, and Mastodon, coupled with the migration of Facebook users to Instagram and TikTok, further restricts the reach of disinformation.

This fragmentation makes disinformation harder to detect and explain, but it also confines its impact to smaller corners of the internet.

Natural protection against AI disinformation

These three factors—the ephemeral nature of most AI-generated fakes, the need for alignment with larger narratives, and the fragmentation of the information landscape—provide some “natural” protections against the potential harm of AI in elections.

However, relying solely on these features is not enough. A proactive approach to safeguarding democracy and elections is imperative.

Excessive technological personalization in election campaigns, whether through AI, social media, or paid ads, should be curtailed. While voters seek to understand what politicians can offer individually, they also want transparency about policies offered to everyone.

Political campaigns should refrain from deploying thousands of AI agents to tailor messages to individual voters, as this could undermine the democratic process.

Ensuring that individuals are who they claim to be is crucial in the digital realm. While anonymity has its place in voting, transparency about online identities is essential where electoral matters are concerned.

Trust is paramount, and citizens should have the means to verify the authenticity of online voices. AI-generated content should not be used to deceive or hide identities.

The role of social media platforms

As major conduits of information dissemination, social media platforms must actively contribute to combating AI-generated disinformation. While not all platforms may become significant players in generative AI, they still play a vital role in shaping public discourse. These platforms should help users identify AI-generated content, monitor and prevent large-scale manipulation of accounts and comments, curb coordinated AI harassment, and facilitate research and audits of their systems.

To confront the threat posed by AI-generated disinformation, society must take a collective stance in favor of democracy. Disinformation often emerges from influential figures, contributing to polarization and fragmentation.

As leaders set examples, society follows suit. Therefore, it is crucial to establish higher standards and unequivocally denounce politicians and campaigns that exploit AI to mislead citizens.


Brian Koome

