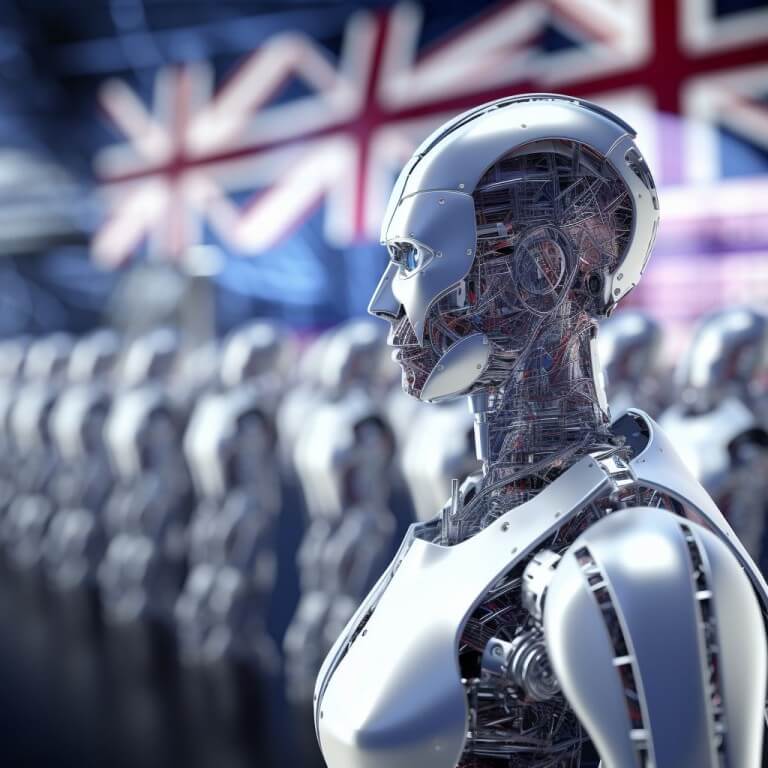

Global Cybersecurity Agencies Advocate ‘Secure by Design’ Approach in AI Security Guidance

- The U.K. National Cyber Security Centre, in collaboration with 22 global cyber partners, has issued comprehensive AI security guidance emphasizing the importance of a “secure by design” approach.

- The guidance, led by the U.K. NCSC and the U.S. Cybersecurity and Infrastructure Security Agency, centers on the secure design, development, deployment, and operation of AI systems, addressing vulnerabilities in supply chains and models.

- The guidelines aim to establish universal standards for AI security, but questions arise regarding enforcement, especially in countries like China, Russia, and Iran that haven’t participated in global AI regulations.

The U.K. National Cyber Security Centre (NCSC) and the U.S. Cybersecurity and Infrastructure Security Agency (CISA), joined by 22 cyber partners worldwide, have unveiled a comprehensive set of guidelines for securing artificial intelligence (AI) systems. Emphasizing a “secure by design” philosophy, the guidance covers each stage of AI development and aims to harden the technology against cyber threats and vulnerabilities.

The joint guidance, framed around the “secure by design” principle, highlights the increasing security risks associated with AI and machine learning applications, specifically addressing concerns stemming from supply chain vulnerabilities and the models used in these systems.

The pillars of security

Under the overarching theme of ‘Secure by Design,’ the guidelines focus on four key areas: secure design, secure development, secure deployment, and secure operation and maintenance. These pillars collectively aim to create a robust framework to safeguard AI systems against potential cyber threats.

The first pillar, secure design, stresses building security into AI systems from the moment they are conceived. Developers are expected to account for security in the system’s foundational architecture, which helps mitigate in advance the vulnerabilities that come with using third-party models and APIs.
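One way to picture a design-time control for third-party models is pinning a checksum for each external artifact and refusing to load anything that does not match. The sketch below is illustrative only and is not taken from the guidance; the function names and the sample bytes are hypothetical.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a lowercase hex string."""
    return hashlib.sha256(data).hexdigest()


def verify_artifact(data: bytes, pinned_digest: str) -> bool:
    """Check a downloaded model artifact against a digest pinned in advance.

    hmac.compare_digest compares the two hex strings in constant time.
    """
    return hmac.compare_digest(sha256_hex(data), pinned_digest.lower())


# Hypothetical bytes standing in for a third-party model file.
model_bytes = b"example third-party model weights"
# Assumption: in practice the digest would be recorded from a trusted source.
pinned = sha256_hex(model_bytes)

print(verify_artifact(model_bytes, pinned))                # True: untampered copy
print(verify_artifact(model_bytes + b"tampered", pinned))  # False: modified copy
```

A check like this only shows that the file matches what was pinned; it says nothing about whether the original model was trustworthy in the first place.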

The second pillar, secure development, carries the same security mindset into the development phase.

It recommends a range of measures, including checking external APIs for flaws, limiting the scope of data transfers to external domains, and scrutinizing and sanitizing training data to prevent unintended system behavior that could compromise security.
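Two of these measures, limiting data transfers to external domains and sanitizing training data, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the guidance’s own method: the allowlisted host, the label set, and the function names are all hypothetical.

```python
from urllib.parse import urlparse

# Hypothetical allowlist of external hosts the system may send data to.
ALLOWED_HOSTS = {"api.example.com"}


def is_transfer_allowed(url: str) -> bool:
    """Permit outbound transfers only over HTTPS to an allowlisted host."""
    parsed = urlparse(url)
    return parsed.scheme == "https" and parsed.hostname in ALLOWED_HOSTS


def sanitize_records(records, allowed_labels, max_chars=1000):
    """Keep (text, label) training records with a known label and clean text."""
    clean = []
    for text, label in records:
        if label not in allowed_labels:
            continue  # unknown label: possible poisoning or data error
        text = text.strip()
        if not text or len(text) > max_chars:
            continue  # empty or oversized sample
        if not text.isprintable():
            continue  # control characters (including newlines) in the sample
        clean.append((text, label))
    return clean


records = [
    ("  good sample  ", "spam"),
    ("bad\x00sample", "ham"),
    ("odd label", "unknown"),
]
print(sanitize_records(records, {"spam", "ham"}))
print(is_transfer_allowed("https://api.example.com/v1/infer"))
print(is_transfer_allowed("https://api.example.com.evil.net/upload"))
```

The filters here are deliberately strict and simple; a real pipeline would likely need rules tailored to its own data, such as allowing multi-line text.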

Addressing challenges in global AI security compliance

Despite the guidelines representing a significant step towards international cooperation on AI security, challenges arise concerning enforcement. Tom Guarente, Vice President of External and Government Affairs at Armis, highlights the potential difficulty for countries not involved in the guideline development to implement these recommendations. This raises questions about how to ensure adherence globally, especially with major players like China, Russia, and Iran yet to commit to global AI regulations.

Guarente points out a potential disparity in ease of implementation: countries involved in drafting the guidelines may find it more straightforward to adhere to them than those excluded from the development process.

While the guidelines provide a collective acknowledgment of the critical role of cybersecurity in the rapidly evolving AI landscape, challenges persist in enforcing these measures on a global scale. Guarente’s concerns underscore the need for broader international consensus and commitment to ensuring AI security.

International collaboration and challenges in AI security guidance

As the global community takes a significant stride toward establishing common standards for AI security, the real challenge lies in ensuring widespread adoption and enforcement. How can the international community bridge the gap and garner buy-in from countries not part of the collaborative effort? The journey towards securing AI is undoubtedly underway, but the road ahead requires navigating complexities and fostering a unified commitment to safeguarding this transformative technology.


Disclaimer. The information provided is not trading advice. Cryptopolitan.com holds no liability for any investments made based on the information provided on this page. We strongly recommend independent research and/or consultation with a qualified professional before making any investment decisions.
