China-U.S. Tech Rivalry and the Urgency of Global AI Safety

- Global AI safety is a pressing concern as AI’s potential catastrophic risks transcend borders.

- The China-U.S. tech rivalry may hinder AI safety efforts, underscoring the need for international collaboration.

- Bill Gates’ warning about a fast-spreading virus highlights the importance of proactive measures in AI risk management.

As artificial intelligence (AI) advances rapidly, policymakers face a pressing problem: AI could introduce catastrophic risks that transcend national borders. While the exact nature and probability of these risks remain uncertain, experts emphasize the need for proactive measures to prevent AI disasters.

The challenge of AI catastrophes

AI could bring about catastrophic events with far-reaching consequences. These include the malicious use of AI to create lethal pathogens, the use of AI to engineer financial crises, and the emergence of rogue AI systems beyond human control. Because such catastrophes are hard to predict, many dismiss them as remote possibilities. However, waiting for concrete evidence before acting may prove too late, as the COVID-19 pandemic demonstrated.

Learning from the past, we must recognize how difficult it is for people to grasp the significance of remote risks. Bill Gates’ warning about the world’s vulnerability to a fast-spreading virus went unheeded until the coronavirus pandemic made its devastating impact a reality. We must not repeat this mistake in addressing the uncertainties surrounding AI.

Gradual adoption of AI technologies

MIT professors Daron Acemoglu and Todd Lensman propose a cautious approach to adopting transformative technologies. Society should gradually adopt new technologies to allow time for risk assessment. Once it becomes more certain that disasters are unlikely, society can accelerate adoption. However, the current practices of leading nations contradict this approach.

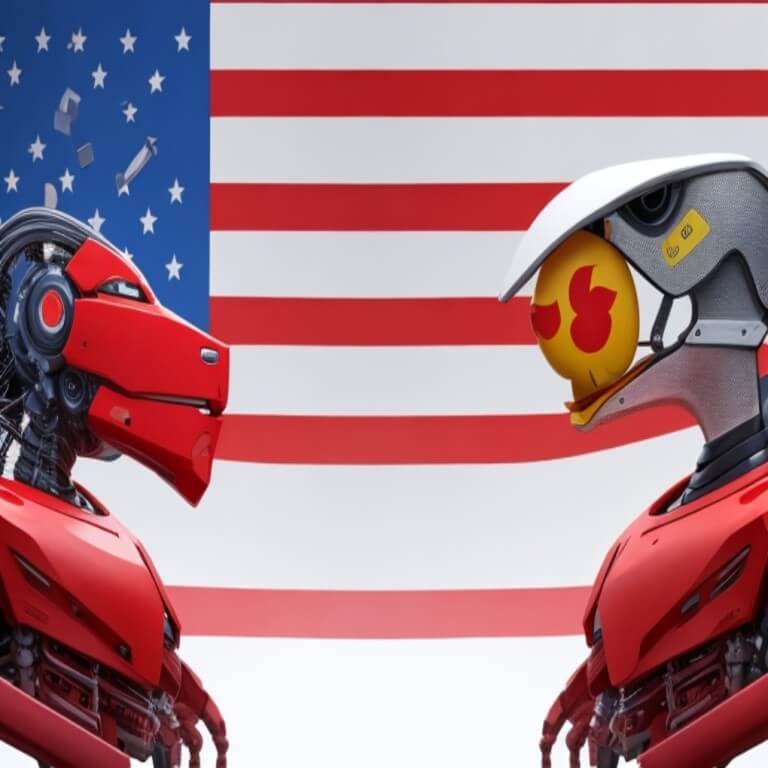

The U.S. and China are currently in an intense rivalry to assert dominance in AI. Both nations are eager to advance their AI capabilities, but this competition is occurring without a commensurate focus on AI safety.

In the United States, cutting-edge AI companies are developing powerful foundation models that may pose risks to public safety. Despite substantial investments in AI development, these companies have allocated limited resources to ensuring AI safety. President Joe Biden’s recent executive order aims to address this by requiring developers of frontier AI models to share safety test results and other vital information with the government. Additionally, the U.S. has taken measures to curb China’s AI progress, including export restrictions and bans on U.S. investment in China’s AI sector. These actions, while slowing China’s advancement, encourage Chinese companies to strive for technological self-sufficiency, which may further complicate AI risk assessment.

Impact of China-U.S. tech rivalry on AI safety

The ongoing tech rivalry has also influenced Beijing’s approach to regulating AI. While China has introduced comprehensive regulations for generative AI services, these primarily apply to services offered to the public and focus on content and information control. Services for business purposes remain less regulated, reflecting China’s strategy to foster growth in this critical tech sector to compete with the U.S. This competition risks triggering a “race to the bottom” in AI governance between the world’s leading AI powers.

The importance of international collaboration

To address the looming AI risks, the international community must recognize the potentially catastrophic consequences and urge global AI businesses to establish robust safety protocols. The upcoming U.K. AI safety summit is a critical starting point for initiating a dialogue on these vital issues.

The China-U.S. rivalry in artificial intelligence has raised concerns that AI safety is being neglected. While competition can drive innovation, it should not overshadow the urgent need to collaborate on mitigating AI risks. The world must heed the lessons of past crises and work together proactively to ensure the responsible development and regulation of AI. Only through international collaboration can we hope to contain the potentially catastrophic consequences of AI technologies.


Editah Patrick

