AI’s Accountability Crisis – OpenAI’s Leadership Struggle and the Moral Battle in Warfare

- AI’s use in military operations like in Gaza raises serious ethical concerns, particularly regarding civilian casualties.

- OpenAI’s internal management issues highlight the challenges in governing powerful AI technologies.

- There’s a growing need for effective regulation and oversight of AI to balance innovation with ethical responsibility.

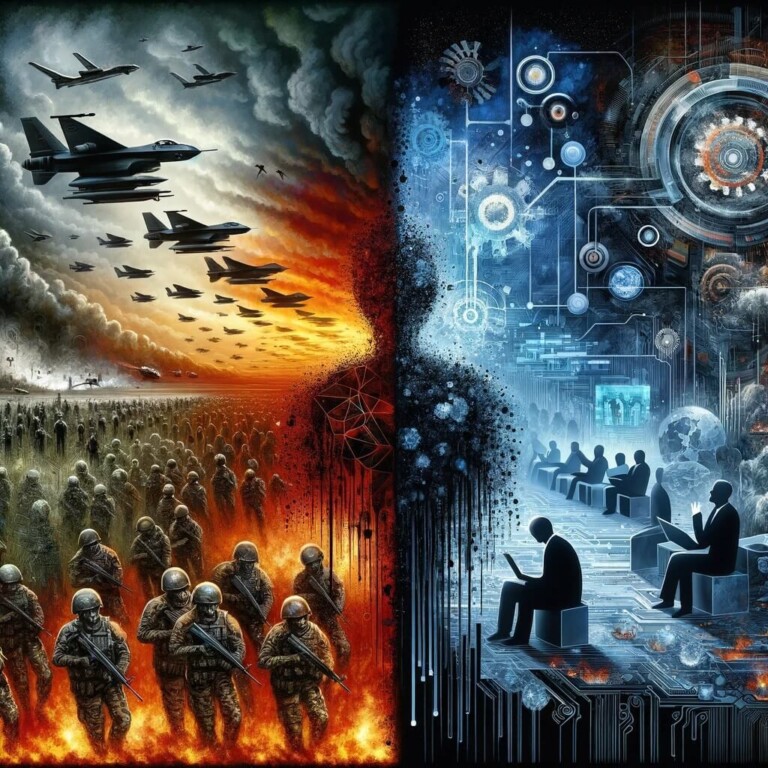

In an era where the impact of artificial intelligence (AI) on both warfare and corporate governance looms large, the ethical tightrope between innovation and accountability is more precarious than ever. The integration of AI in military operations has reached a critical juncture.

Recent conflicts, particularly in Gaza, spotlight the alarming use of AI in life-or-death decisions. The Israeli military’s AI program, “The Gospel,” serves as a stark example. It accelerates target identification regardless of whether the targets are correct, raising ethical concerns over rising civilian casualties, including children. This escalation in AI-driven warfare necessitates an urgent reevaluation of the ethical boundaries and regulatory frameworks governing AI use in military contexts.

At the same time, the sudden removal of Sam Altman as chief executive at OpenAI sparked a wave of concern regarding the oversight and decision-making processes within a leading organization in the AI industry. This incident revealed deeper uncertainties about the intricacies of AI development and utilization, an issue that had been somewhat obscure even before these internal challenges came to light.

Globally, lawmakers grapple with the rapid evolution of AI technology. Their struggle to establish regulations and oversight mechanisms is evident. The pace of AI innovation far exceeds the development of legal and ethical guidelines. This gap poses significant risks, not only in military applications but across various sectors where AI is rapidly being adopted.

AI’s alarming toll on human lives in Gaza and OpenAI’s governance crisis

The implementation of AI in the Gaza conflict highlights a grim reality. AI systems, devoid of human empathy, are selecting targets. The resulting civilian casualties, including a disturbing number of children, paint a harrowing picture of AI’s potential misuse. This situation demands a critical examination of AI’s role in warfare, emphasizing the need for stringent ethical standards and human oversight in AI-driven military operations.

OpenAI, a leading AI research organization, recently faced significant governance turmoil. The unexpected dismissal of CEO Sam Altman has sparked discussions about transparency and control in AI development. This incident underscores the broader challenges of governing powerful AI entities. The lack of clarity and accountability in these organizations poses a risk not only to the ethical development of AI but also to public trust in these technologies.

A notable knowledge deficit in government bodies regarding AI compounds the challenge of regulation. This gap, coupled with bureaucratic complexities and concerns over hindering AI’s benefits, has led to a laissez-faire approach to AI governance. Consequently, AI companies, including those developing potentially transformative technologies, operate with minimal oversight. This unchecked growth of AI, especially in sensitive areas like military applications, is a significant concern.

Lessons from Gaza warfare – AI’s shortcomings or Israel’s willful misuse?

Militaries worldwide are closely observing the ruthless use of AI in Gaza, which could set a negative precedent for future conflicts. Is the global concern driven by AI’s lack of ethical judgment, or by Israel’s willful use of AI for mass destruction? The lessons learned here could shape the development and deployment of AI in other military contexts. This scenario underscores the urgent need for an international dialogue on the ethical use of AI in warfare, with a focus on preventing civilian casualties and ensuring accountability.

The situation in Gaza and the governance issues at OpenAI mark a turning point. They depart from the narrative that AI will unequivocally lead to a better world. Could concerns about AI misuse, including deliberate misuse, alter this trajectory? The potential of AI to aid humanity in achieving unprecedented goals is undeniable. Yet its development in secrecy and its application in life-threatening scenarios demand a more critical and ethical approach. Effective regulation and oversight are crucial to ensure AI’s positive impact on society.

The future of AI – A call for responsible innovation

As AI continues to evolve, its impact on society and warfare grows more profound. The current regulatory and ethical landscape lags behind this rapid development. Governments and international bodies must expedite the establishment of robust frameworks to govern AI. These frameworks should prioritize ethical considerations, transparency, and accountability, particularly in high-stakes applications like warfare. Only through responsible innovation can AI truly benefit humanity without causing irreparable harm.

AI’s dual role as a tool for progress and a potential instrument of destruction is now more evident than ever. The incidents in Gaza and at OpenAI serve as a wake-up call. They highlight the need for a balanced approach to AI development, one that acknowledges its benefits while critically addressing its risks. As AI continues to reshape our world, a collective effort is necessary to harness its potential responsibly, ensuring it serves the greater good without compromising ethical standards.



Aamir Sheikh

Aamir is a tech journalist with nearly six years of experience in the crypto and tech industries. He graduated from MAJ University with an MBA in Finance and Marketing. He now works with Cryptopolitan, where he reports on the latest developments in the cryptocurrency markets and price predictions.
